How This Project Started

At Softjourn, we often find that the most useful R&D work comes from being embedded in a client's world rather than working alongside it.

Our team was supporting a client who had committed to an AI-forward development culture and was actively building AI tooling into their engineering workflows. Working within that environment gave our team a front-row view of how AI agents perform in a real production context.

With the client's support, one of our senior software engineers set out to bring structure to what was already happening: documenting what worked, identifying where the risks lived, and building a repeatable method from the ground up.

The Challenge

Building and maintaining software on a microservices architecture (a system made up of many small, independent services that communicate with each other rather than one large unified application) creates a particular kind of overhead.

A bug fix, a documentation update, a new round of unit tests: on a microservices platform, each of these touches multiple systems and carries its own time cost. The root cause of a crash might span a dozen services. Test coverage for a single feature, done properly, can consume days. On a complex platform, it all accumulates fast.

Tasks like these were consuming a disproportionate share of developer time on the engagement, time that could otherwise go toward architectural decisions, code review, and higher-complexity problem solving.

The question was whether AI agents could take on this overhead without introducing new problems in the process.

"Integrating AI is no longer optional; it is a critical skill. Refusing to use AI tools today is like choosing to work with a chisel when a rotary hammer is available." – Senior Engineer, Softjourn

The Solution

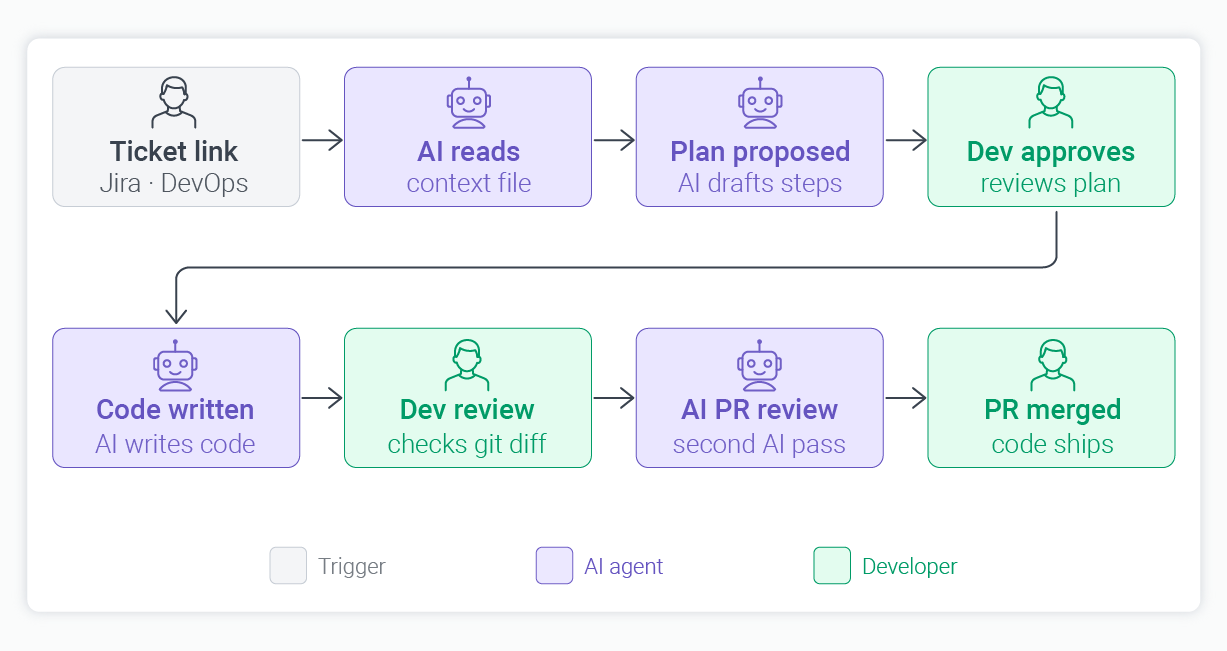

What our senior software engineer built is less a single tool and more a structured method. A few key decisions shaped how the workflow operates.

- A persistent context file in every repository: Rather than re-explaining the project to an AI agent at the start of each session, our engineer introduced an agents.md file: a standing briefing document that lives directly in each codebase. It covers the project's architecture, coding standards, and any framework restrictions the team follows. With that file in place, the daily prompt simplifies to pasting a ticket link. The agent reads the file, reads the ticket, and gets to work.

- Different tools for different tasks: The workflow uses a leading LLM through its command-line interface (CLI) for local coding tasks, where the agent needs direct access to files in the development environment. A separate AI agent handles investigations spanning multiple microservices, since it can navigate across entire repositories and interact with logs and databases simultaneously. Additional AI tools cover documentation and English-language drafting, where they produce the clearest and most natural output.

- Micro-iterations, not large code blocks: After the AI makes a code change, the developer stages it using git (a version control system) to review exactly what changed before moving forward. This keeps changes small and reviewable rather than letting the agent produce large blocks of code in one pass.

- AI reviews AI: Once the code is ready for review, a link to the pull request is sent to a separate AI code review agent for an independent secondary pass. This cross-agent verification step adds a quality assurance layer that has caught issues neither the developer nor the original agent flagged on their own.
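The micro-iteration loop relies on a simple git property. Once a reviewed change is staged, `git diff` compares the working tree against the index, so it shows only the agent's newest edit, while `git diff --cached` holds what has already been accepted. The sketch below is a hypothetical helper, not part of the team's actual tooling, that measures the size of that pending edit using `git diff --numstat`. The 200-line budget and all function names are assumptions.

```python
import subprocess

# Hypothetical review budget: the article does not specify a size limit.
MAX_PENDING_LINES = 200


def parse_numstat(output: str) -> int:
    """Sum added + deleted lines from `git diff --numstat` output.

    Each line is tab-separated: added, deleted, path. Binary files report
    "-" for both counts and are skipped.
    """
    total = 0
    for line in output.splitlines():
        parts = line.split("\t")
        if len(parts) < 3 or parts[0] == "-":
            continue
        total += int(parts[0]) + int(parts[1])
    return total


def pending_change_size(repo: str = ".") -> int:
    """Lines changed in the working tree relative to the index.

    If every reviewed change has been staged, this is only the agent's
    latest edit. Note: brand-new untracked files do not appear in `git diff`.
    """
    result = subprocess.run(
        ["git", "diff", "--numstat"],
        cwd=repo, capture_output=True, text=True, check=True,
    )
    return parse_numstat(result.stdout)


def is_reviewable(size: int, limit: int = MAX_PENDING_LINES) -> bool:
    """True if the pending edit is small enough to review in one pass."""
    return size <= limit
```

In use, a developer would call `pending_change_size()` after each agent step and read the diff itself. If the change is acceptable, they would `git add` it so the next iteration starts from a clean comparison point.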

The Results

Across the engagement, the productivity improvements were consistent and measurable:

| Task | Before | After |
|---|---|---|
| Coding (well-defined, clearly scoped tasks) | 1 to 2 days | 1 to 2 hours (up to 5 to 10x on specific tasks) |
| Documentation updates | ~1 week | Minutes |
| Unit test generation (with edge cases) | 2 to 3 days | Seconds |

The 5 to 10x speedup on individual coding tasks applies specifically to work that is well-defined and clearly scoped upfront. More exploratory tasks, complex feature design, or anything requiring significant architectural judgment will likely see smaller gains.

Beyond raw speed, the qualitative shift in how engineering work gets structured is just as significant. With AI handling boilerplate code generation and documentation, our engineer's focus shifted toward architectural oversight, reviewing logic, and catching what the agent could not know on its own.

One example that stands out: when the team needed to detect circular recursive dependencies (a complex algorithmic problem where services end up referencing each other in a loop, causing crashes), the AI produced an elegant solution in minutes. Working through it manually would have taken significantly longer and required sustained cognitive effort.
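The article does not show the solution the agent produced. The underlying problem, however, is classic cycle detection in a directed graph. For reference, here is a minimal sketch of the standard three-color depth-first search. It assumes service dependencies are available as a mapping from each service to the services it calls, and the service names in the example are hypothetical.

```python
from typing import Dict, List, Optional


def find_cycle(graph: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one dependency cycle (first node repeated at the end), or None.

    Iterative DFS with three colors: WHITE = unvisited, GRAY = on the
    current DFS path, BLACK = fully explored. Reaching a GRAY node means
    an edge leads back into the current path, i.e. a cycle.
    """
    WHITE, GRAY, BLACK = 0, 1, 2
    color = {node: WHITE for node in graph}
    for deps in graph.values():
        for dep in deps:
            color.setdefault(dep, WHITE)  # include leaf-only services
    parent: Dict[str, Optional[str]] = {}

    for start in list(color):
        if color[start] != WHITE:
            continue
        parent[start] = None
        color[start] = GRAY
        stack = [(start, iter(graph.get(start, [])))]
        while stack:
            node, children = stack[-1]
            child = next(children, None)
            if child is None:
                color[node] = BLACK  # all dependencies explored
                stack.pop()
            elif color[child] == GRAY:
                # Back edge: walk parent links from node up to child.
                cycle = [child]
                cur = node
                while cur != child:
                    cycle.append(cur)
                    cur = parent[cur]
                cycle.append(child)
                cycle.reverse()
                return cycle
            elif color[child] == WHITE:
                parent[child] = node
                color[child] = GRAY
                stack.append((child, iter(graph.get(child, []))))
    return None
```

An iterative stack is used instead of recursion, so a long dependency chain cannot hit Python's recursion limit. For example, `find_cycle({"orders": ["billing"], "billing": ["inventory"], "inventory": ["orders"]})` returns `["orders", "billing", "inventory", "orders"]`.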

What We Learned

Alongside the gains, several situations arose that sharpened how we think about AI in a production development context. Each one led to a concrete process improvement.

Cross-Project Dependencies Need Explicit Documentation

Early in the workflow, our engineer asked the AI to remove two database columns that appeared unused within the service being worked on. The agent did exactly that, correctly following the instructions it had been given.

What it did not have was visibility into four other projects that actively referenced those columns. The result was temporary crashes across multiple services, which the team identified and resolved quickly.

The fix was straightforward: the agents.md file was updated to document cross-project dependencies explicitly, giving the agent full context on any future change of that kind.

The lesson is a useful one for any team adopting agentic workflows: AI executes based on what it knows. Ensuring it knows enough is the engineer's responsibility.
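The fix the team adopted was to document dependencies in agents.md. A complementary and purely illustrative safeguard is to search every related repository for a column name before dropping it. The sketch below assumes the repositories are checked out locally. The suffix list and function names are hypothetical, and a plain-text search will miss column names that are built dynamically at runtime.

```python
import re
from pathlib import Path
from typing import Dict, Iterable, List, Set

# Hypothetical set of file types worth scanning; adjust to your stack.
SOURCE_SUFFIXES = {".py", ".sql", ".ts", ".java", ".cs", ".go"}


def mentioned_columns(columns: Iterable[str], text: str) -> Set[str]:
    """Return the column names that appear as whole words in text."""
    return {col for col in columns if re.search(rf"\b{re.escape(col)}\b", text)}


def find_column_references(
    columns: Iterable[str], repo_roots: Iterable[str]
) -> Dict[str, List[str]]:
    """Map each column name to the files, across all repos, that mention it."""
    columns = list(columns)
    hits: Dict[str, List[str]] = {col: [] for col in columns}
    for root in repo_roots:
        for path in sorted(Path(root).rglob("*")):
            if not path.is_file() or path.suffix not in SOURCE_SUFFIXES:
                continue
            text = path.read_text(encoding="utf-8", errors="ignore")
            for col in mentioned_columns(columns, text):
                hits[col].append(str(path))
    return hits
```

An empty result for a column is necessary but not sufficient evidence that it is safe to drop. Any hit outside the service being changed is a clear signal to stop and check with the owning team.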

Verify Every Package the AI Recommends

AI agents sometimes suggest software packages (pre-built code libraries) that do not actually exist. Attackers have started publishing malicious packages under these commonly hallucinated names, counting on developers to install them without checking.

The team now verifies package download counts and reviews source code before installing anything the AI recommends. This added step takes minutes and significantly reduces the risk of introducing compromised dependencies.
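Part of this check can be scripted. The sketch below assumes Python packages from PyPI. It uses PyPI's public JSON endpoint (`https://pypi.org/pypi/<name>/json`) to confirm a package exists, and the third-party pypistats.org API for recent download counts. The response shape of the stats API is an assumption noted in the code. The threshold and function names are hypothetical, and the `fetch` parameter lets tests supply canned responses. None of this replaces actually reading the source.

```python
import json
import urllib.error
import urllib.request
from typing import Callable, List, Optional

PYPI_URL = "https://pypi.org/pypi/{name}/json"
# Third-party stats service; response shape assumed to be
# {"data": {"last_day": ..., "last_week": ..., "last_month": ...}, ...}
PYPISTATS_URL = "https://pypistats.org/api/packages/{name}/recent"

# Hypothetical threshold; tune for your ecosystem.
MIN_MONTHLY_DOWNLOADS = 10_000


def fetch_json(url: str) -> Optional[dict]:
    """GET a JSON document; return None if the server answers 404."""
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            return json.load(resp)
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return None
        raise


def vet_package(
    name: str,
    min_monthly: int = MIN_MONTHLY_DOWNLOADS,
    fetch: Callable[[str], Optional[dict]] = fetch_json,
) -> List[str]:
    """Return red flags for an AI-suggested package; empty means none found."""
    meta = fetch(PYPI_URL.format(name=name))
    if meta is None:
        return [f"{name}: not on PyPI (possibly a hallucinated name)"]
    flags = []
    stats = fetch(PYPISTATS_URL.format(name=name)) or {}
    monthly = stats.get("data", {}).get("last_month", 0)
    if monthly < min_monthly:
        flags.append(f"{name}: only {monthly} downloads last month")
    if not (meta.get("info") or {}).get("project_urls"):
        flags.append(f"{name}: no source/project URLs listed to review")
    return flags
```

If the stats service has no data for a package, the sketch treats the package as having zero downloads. This errs on the side of flagging it for manual review.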

Security Policy Settings Require Deliberate Attention

AI agents with overly permissive security configurations can assemble problematic code from fragments that each look harmless on their own. Setting strict security policies on any agent with access to the codebase is a foundational requirement, not an afterthought.

Our team established these guardrails before the workflow began and has maintained them as it continues to evolve.
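The article does not name the agent tooling or its configuration format, and real agent tools ship their own permission settings. As a tool-agnostic illustration of what "strict" can mean, the sketch below applies a deny-by-default allowlist to shell commands that an agent proposes. The policy contents and function name are hypothetical.

```python
import shlex
from typing import Dict, FrozenSet

# Hypothetical policy: executables the agent may run, mapped to the
# subcommands allowed for each. An empty set means any arguments are allowed.
ALLOWED: Dict[str, FrozenSet[str]] = {
    "git": frozenset({"status", "diff", "log", "add"}),
    "pytest": frozenset(),
    "ls": frozenset(),
}

# Characters that enable chaining, piping, redirection, or substitution.
SHELL_METACHARACTERS = set(";|&<>`$\n")


def is_allowed(command: str) -> bool:
    """Deny-by-default check of a single command line proposed by an agent."""
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return False
    try:
        tokens = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return False
    if not tokens or tokens[0] not in ALLOWED:
        return False
    subcommands = ALLOWED[tokens[0]]
    if not subcommands:
        return True
    return len(tokens) > 1 and tokens[1] in subcommands
```

The design choice is to reject anything unrecognized, such as `git push` or `curl`, rather than trying to enumerate dangerous commands. This keeps the policy small enough to audit.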

Client Data Protection is Non-Negotiable

Throughout this workflow, protecting client data remained a top priority. Softjourn maintains strict internal guidelines governing how AI tools interact with client code and information.

These include controls on what data AI agents can access, requirements for validating all outputs before implementation, and clear boundaries on how client code is handled within any AI context. These guardrails were in place from the start.
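The specific controls are not described in detail, so the following is only an illustration of the idea. This hypothetical deny-list check decides whether an agent may read a given repository path. In practice, such rules usually live in the AI tool's own configuration, and the patterns here are examples rather than an actual policy.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# Hypothetical deny-list. In fnmatch, `*` also matches "/", so
# "*/secrets/*" covers secrets folders at any depth.
BLOCKED_PATTERNS = [
    "*.env", "*.env.*",          # environment files with credentials
    "*.pem", "*.key",            # private keys and certificates
    "secrets/*", "*/secrets/*",  # dedicated secrets folders
    "exports/*", "*/exports/*",  # client data dumps
]


def agent_may_read(path: str) -> bool:
    """Return False if a repo-relative path matches any blocked pattern.

    Does not resolve ".." segments; resolve paths before checking.
    """
    normalized = PurePosixPath(path).as_posix()
    return not any(fnmatchcase(normalized, p) for p in BLOCKED_PATTERNS)
```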

"Formal training on prompt engineering is obsolete. Developers just need to take it and try, interacting with the AI as if conversing with a colleague. If an agent fails, you iterate." – Senior Engineer, Softjourn

The breakdown below captures where AI assistance delivered the most consistent value on this engagement, and where human judgment remains essential. "Best fit" indicates the highest return with standard review; "Good fit" means solid results with some additional validation recommended:

| Task Type | AI Suitability | Notes |
|---|---|---|
| Coding | Best fit | Boilerplate and feature code; speed gains most consistent on well-defined, clearly scoped tasks |
| Documentation | Best fit | Near-instant updates via connection to the project management platform; high accuracy |
| Unit test generation | Best fit | Comprehensive boundary and edge-case coverage produced in seconds |
| Cross-service debugging | Good fit | AI navigates multiple repositories and logs to trace root causes |
| Complex algorithm design | Good fit | Architectural solutions produced in minutes versus hours manually |
| High-stakes system changes | Human review required | AI lacks implicit cross-project dependency knowledge; always verify scope before executing |

Where Else Could This Approach Apply?

The workflow described here addresses a specific kind of engineering overhead, and that overhead is not unique to this engagement. Any team running complex, service-based software will recognize the same patterns.

Regulated and Compliance-Heavy Industries

Event ticketing, healthcare technology, insurance platforms, and financial services all carry significant documentation requirements and compliance obligations. A workflow that cuts documentation time from roughly a week to minutes multiplies quickly across a large product team. The depth of edge-case test coverage that AI adds is especially valuable where missed scenarios carry regulatory or operational risk.

Fast-Scaling Product Teams

Startups growing quickly often accumulate documentation debt. AI-assisted workflows help lean teams maintain test coverage and documentation standards that would otherwise fall behind during high-growth phases.

Enterprise Software Modernization

Teams migrating from legacy systems to modern architectures generate substantial documentation work as they map existing functionality to new structures. AI agents fed the existing codebase can scaffold much of this and give engineers a starting point rather than a blank page.

In each case, the core advantage is the same: AI handles the repeatable, time-intensive work, freeing engineers to focus on the decisions and oversight that require their expertise.

Conclusion

What our team built within this client's environment is not a shortcut. It is a disciplined method for working alongside AI rather than around it. The productivity gains are real and well worth pursuing, and they come with a clear-eyed understanding of where human oversight matters most.

We are continuing to build on these findings across our teams, refining workflows and developing shared guidance around what AI-augmented development looks like in practice.

For engineering teams looking to understand what a structured AI development workflow can deliver on a real project, we are glad to share what we have learned. Contact Softjourn to start the conversation.