How This Project Started

At Softjourn, innovation does not happen in a vacuum. Our R&D projects are typically rooted in real situations our teams encounter on client work, and this one was no different. While supporting a client in the entertainment technology space, our BA and QA professionals were operating under significant resource constraints: just 10 BA hours per month, and a lean QA capacity that made thorough documentation difficult to sustain.

Rather than accepting those limits as fixed, a small internal team set out to answer a practical question: could an AI framework help close the gap? Specifically, could it reduce the time burden on documentation without compromising quality or introducing new risks?

The result was a structured proof of concept (PoC) using BMAD, the Breakthrough Method of Agile AI-Driven Development, an open-source agent framework built to work alongside software development teams. We ran it across a set of real QA and BA workflows, tracked results rigorously, and documented everything, including the parts where the AI underdelivered.

What follows is an honest account of that process.

"AI-assisted documentation did not just make our QA team faster. It gave them the capacity to go deeper than the budget would otherwise allow." (Softjourn Team)

The Challenge

Tight budgets are a reality on many software projects, and the constraints here were particularly sharp. With BA time capped at 10 hours per month, the team could cover only so much ground. As a result, feature documentation fell behind, market research was squeezed out, and test plans were written at a pace that left edge cases undiscovered and coverage uneven.

QA faced a parallel challenge. Writing thorough test documentation for each major feature is time-intensive work, and when capacity is limited, teams naturally focus on the most critical paths.

That means the less obvious scenarios get skipped. In QA terms, these are called negative edge cases: tests that check what happens when something goes wrong, or when a user does something unexpected. For example, a payment that processes twice when a user double-taps a button, or a form that submits successfully but saves nothing to the database. These are exactly where bugs tend to hide, and under tight capacity, this testing may be what gets cut first.

The objective for our team was clear: validate whether AI-assisted tooling could meaningfully improve documentation output and testing depth, without increasing cost or requiring significant new resources. The constraint was equally clear: any solution had to work within existing capacity, not around it.

The Solution

BMAD works by analyzing a project's existing codebase and documentation, then using that knowledge to complete structured workflows and generate artifacts.

In plain terms: you point it at what already exists, define what you need, and it produces a working draft. Test plans, edge case checklists, brainstorming documents, market research summaries, product requirement documents (PRDs), and more can all be generated through defined workflow templates.

Our team ran the PoC using BMAD with a leading LLM. Two separate tracks of testing ran in parallel: one focused on QA workflows and one on BA workflows.

QA Workflows Tested

- General test plan creation

- Multi-device or integration testing documentation

- Complex feature scenario testing

BA Workflows Tested

- Market research documentation

- Feature brainstorming and ideation

- Feature PRD (Product Requirement Document) creation

- UX specification drafting

- PRD analysis

For each workflow, the team ran the task through BMAD, reviewed the output against what an experienced team member would produce manually, and rated it for accuracy, quality, and practical usability. Initial setup and onboarding took approximately 5 hours, after which testing proceeded against live project tasks.

The Benefits

A Strong Case for Adoption for QA

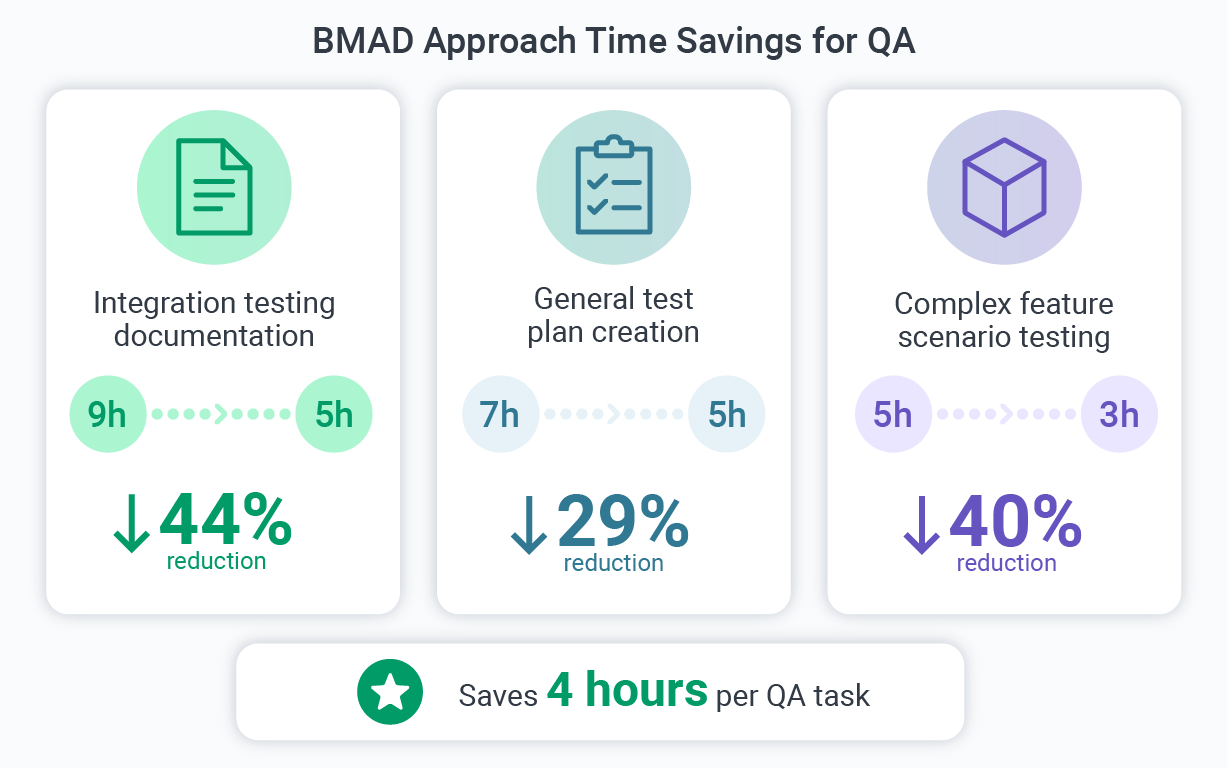

Across every QA scenario we tested, BMAD delivered consistent time savings. Overall, documentation finalization time dropped by 25 to 30% compared to working manually. On specific tasks, the reduction reached as high as 44%:

- Integration testing documentation: reduced from 9 hours to 5 hours, a 44% reduction

- General test plan creation: reduced from 7 hours to 5 hours, a 29% reduction

- Complex feature scenario testing: reduced from 5 hours to 3 hours, a 40% reduction

Across major QA feature documents, the tool reliably saved approximately 4 hours per task. On a project running five major features through QA in a single quarter, for instance, that translates to roughly 20 hours of capacity returned to the team, time that can go toward deeper testing, additional coverage, or other delivery work.
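
The arithmetic behind these figures can be checked with a short sketch. The hours come from the list above; the function and variable names are illustrative assumptions, not part of BMAD or of our tooling.

```python
def percent_reduction(before_hours: float, after_hours: float) -> float:
    """Return the relative time reduction as a percentage."""
    if before_hours <= 0:
        raise ValueError("before_hours must be positive")
    return (before_hours - after_hours) / before_hours * 100


# (manual hours, hours with BMAD) as reported for each QA task.
qa_tasks = {
    "Integration testing documentation": (9, 5),
    "General test plan creation": (7, 5),
    "Complex feature scenario testing": (5, 3),
}

# Round to whole percentages, matching how the results are reported.
reductions = {
    name: round(percent_reduction(before, after))
    for name, (before, after) in qa_tasks.items()
}

# Quarterly example from the text: five major features, roughly 4 hours saved each.
features_per_quarter = 5
hours_saved_per_feature = 4
quarterly_hours_returned = features_per_quarter * hours_saved_per_feature

for name, pct in reductions.items():
    print(f"{name}: {pct}% reduction")
print(f"Capacity returned per quarter: {quarterly_hours_returned} hours")
```

Swapping in your own before-and-after hours gives a quick estimate of what a similar pilot might return on another project.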

Beyond the time savings, quality held up well. The AI-generated artifacts included negative edge cases and extended test scenarios that would likely have been skipped under manual time constraints.

In effect, BMAD expanded what the QA team could cover, not just how fast they could cover it. Documentation was also created from scratch for features that previously had none, addressing a meaningful backlog built up by ongoing budget limits.

Our team's assessment was direct: AI-assisted documentation did not just make QA faster. It gave the team the capacity to go deeper than the budget would otherwise allow, generating the edge case scenarios and extended test coverage that typically get cut when time is short.

Only Useful in the Right Contexts for BA

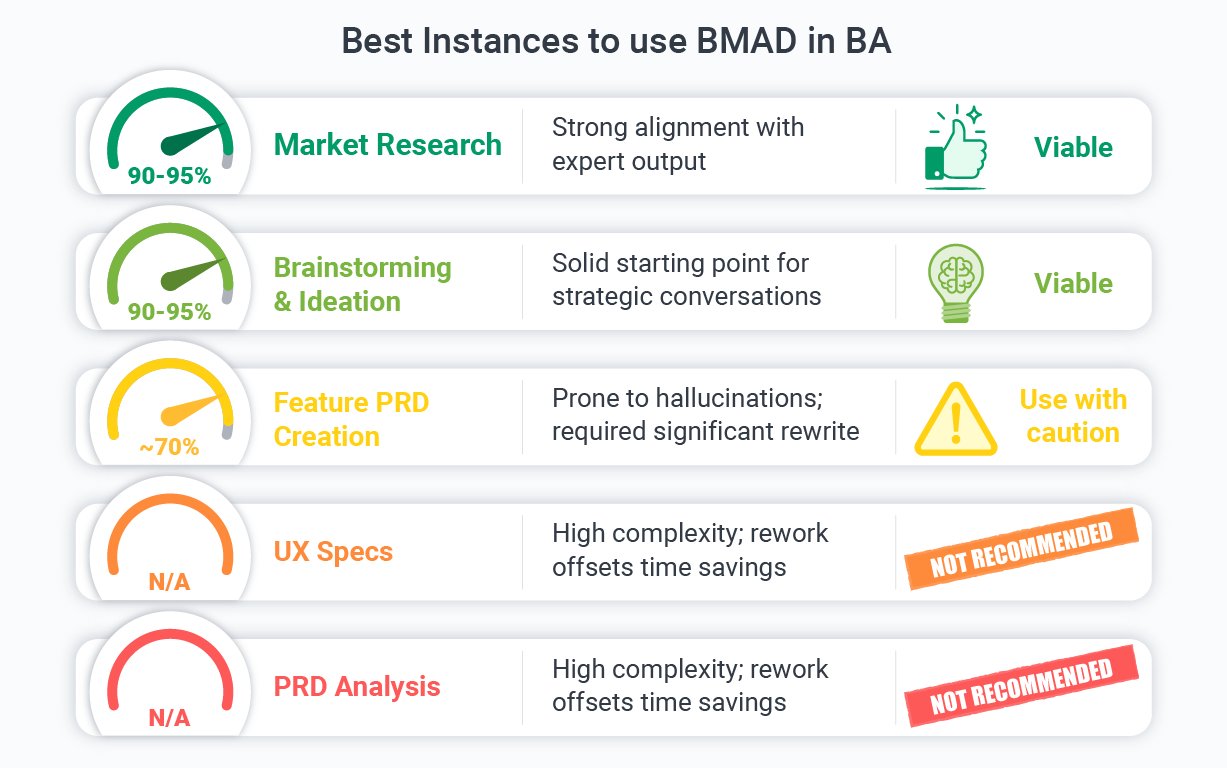

The BA results told a more layered story, and we think that nuance is worth sharing directly.

For market research and brainstorming tasks, BMAD performed well, reaching 90 to 95% alignment with expert-produced output and saving between 1 and 1.5 hours per task. These documents were reliable enough to use as working material with minimal cleanup.

Feature PRD creation was a different story. BMAD cut drafting time dramatically, from roughly 24 hours manually to approximately 3.5 hours, but accuracy dropped to around 70%.

Some sections required significant rewriting, with approximately 20% of certain documents needing to be rebuilt from scratch due to hallucinations, factual inconsistencies, and logic errors rooted in a lack of project-specific context. UX specs and PRD analysis tasks showed similar challenges, with the rework required largely offsetting the initial time savings.
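
To make the trade-off between drafting speed and rework concrete, here is a minimal sketch of the net-savings arithmetic. The 24-hour and 3.5-hour figures come from the PRD results above. The rework values are hypothetical, since we did not record a single rework total, and the function name is an illustrative assumption.

```python
def net_hours_saved(manual_hours: float, draft_hours: float, rework_hours: float) -> float:
    """Hours saved once AI drafting time and human rework are both counted."""
    return manual_hours - (draft_hours + rework_hours)


MANUAL_PRD_HOURS = 24.0   # reported: approximate manual drafting time
BMAD_DRAFT_HOURS = 3.5    # reported: approximate drafting time with BMAD

# Rework at or above this level wipes out the time saving entirely.
break_even_rework = MANUAL_PRD_HOURS - BMAD_DRAFT_HOURS

# Hypothetical rework scenarios, for illustration only.
for rework in (5.0, 12.0, break_even_rework):
    saved = net_hours_saved(MANUAL_PRD_HOURS, BMAD_DRAFT_HOURS, rework)
    print(f"rework {rework:>4} h -> net saving {saved:>4} h")
```

Under these assumptions, the AI draft stops paying for itself once rework approaches 20.5 hours. Time is only part of the picture: errors that slip through review carry their own cost, which this sketch does not model.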

Our team found BMAD useful for BA "cold starts": getting market research and brainstorming documents off the ground quickly without consuming a large portion of the monthly budget. As one team member put it, it is a great tool for introducing feature changes and adjusting existing documentation, but it requires real vigilance on complex specification work where project context matters most.

The table below summarizes viability findings across all BA use cases tested:

| BA Use Case | Accuracy | Summary | Verdict |
|---|---|---|---|
| Market Research | 90-95% | Strong alignment with expert output | Viable |
| Brainstorming & Ideation | 90-95% | Solid starting point for strategic conversations | Viable |
| Feature PRD Creation | ~70% | Prone to hallucinations; requires significant rewrite | Use with caution |
| UX Specs | N/A | High complexity; rework offsets time savings | Not recommended |
| PRD Analysis | N/A | High complexity; rework offsets time savings | Not recommended |

What We Learned

Running this PoC with discipline meant documenting not just the wins, but the conditions that make AI tooling reliable versus risky. A few things became clear:

Treat Every AI Output as a Draft

BMAD does not produce final deliverables independently. Every artifact it generates requires review and, depending on complexity, meaningful revision. Teams that skip this step risk introducing logic errors or inaccurate requirements into their workflows.

Domain Expertise is Not Optional

The accuracy gap in complex BA documents came down to a single factor: the model lacked project-specific context that a human analyst carries naturally. A QA engineer or BA reviewing outputs needs enough domain knowledge to catch what the model gets wrong, particularly in specifications where a plausible-sounding error can be harder to spot than an obvious one.

Maintenance is Part of the Equation

AI-generated documentation degrades if it is not regularly reviewed and updated. On projects with limited BA and QA time, building a review cadence into the workflow is essential. Without it, the initial efficiency gains erode over time as documentation drifts out of sync with the product.

Pattern-Driven Tasks Benefit Most

Across both tracks, the clearest predictor of success was task structure. Repeatable, well-defined workflows (test plans, checklists, research summaries) performed reliably. Open-ended or highly context-dependent tasks (full PRDs, UX specs) exposed the model's limitations. Knowing where that line falls is as important as the tooling itself.

Where Else Could The BMAD Approach Apply?

The patterns we observed in this PoC point to a broader set of situations where AI-augmented documentation delivers real value. If any of these sound familiar, the approach is worth exploring.

Regulated Industries With High Documentation Volume

Financial services, healthcare technology, and compliance-driven platforms require extensive test documentation, audit trails, and requirement specifications. A 29 to 44% reduction in QA documentation time per task, the range we measured in this PoC, multiplies quickly across a large product portfolio, and the depth of edge case coverage that AI adds can be especially valuable in regulated contexts where missed scenarios carry real risk.

Fast-Scaling Startups

Early-stage product teams often carry significant documentation debt. When BA and QA headcount is small, AI-assisted drafting can help a lean team produce and maintain documentation that would otherwise fall through the cracks during high-growth phases.

Platform Migrations and Legacy Modernization

Teams moving from legacy systems to modern platforms generate substantial documentation as they map existing functionality to new architectures. BMAD-style tools, fed an existing codebase and documentation, can scaffold much of this work and give engineers a solid starting point rather than a blank page.

Agile Teams in Sustained Delivery Sprints

When teams operate lean across multiple consecutive sprints, test coverage documentation is often the first thing to slip. An AI drafting layer can keep documentation current without pulling engineers or QA professionals away from active delivery work.

Onboarding and Knowledge Transfer

The BA workflows tested here produced market research summaries and feature brainstorming documents that also double as onboarding material. For teams with frequent turnover or new hires ramping up quickly, AI-generated documentation creates a knowledge base that is easier to produce and maintain than manual documentation alone.

Conclusion

This PoC gave our team something more valuable than a clean set of wins: a clear-eyed view of what AI documentation tools actually deliver right now, and what still requires human expertise to get right. For QA workflows, BMAD earned a strong recommendation. For BA work, the picture is more selective, with real benefits on the right tasks and real risks on the wrong ones.

We are continuing to build on these findings, refining workflows and expanding the range of tasks we test. The goal is not to automate our teams but to give them more capacity where the technology can genuinely support them.

For teams in ticketing, fintech, or any software-driven industry working under similar documentation constraints, we are glad to share what we found and discuss what an AI-augmented approach could look like in practice. Contact Softjourn to start the conversation.